Life (and the Pursuit of Happiness) – But for How Long?

Confronting our mortality is not an easy task for most people. However, there are many reasons people should consider just how long they might live.

In the case of retirement planning, life expectancy matters because it determines how long people collect the benefits, like Medicare and Social Security, that they earn during their working years. According to the Pew Research Center, use of these government benefits programs is “virtually universal (97%) among those ages 65 and older—the age at which most adults qualify for Social Security and Medicare benefits.”

Differences in life expectancy between the rich and the poor can mean that more affluent Americans receive hundreds of thousands of dollars more in benefits than those who are less well off. Beyond the economic considerations, there is a psychological, and perhaps even moral, dimension to thinking about how long we might expect to live in relation to our financial status.

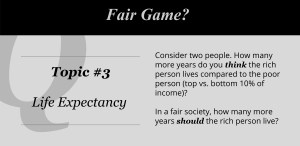

Topic 3 in Fair Game? asked users to think about exactly this question and to estimate how income is related to life expectancy and what that relationship should be in a fair world.

“It will surprise nobody to learn that life expectancy increases with income.”— Michael Specter, 4/16/16, The New Yorker

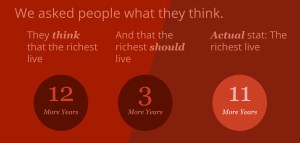

On the whole, our users estimated that the richest 10% of society would have a life expectancy 12 years longer than the poorest 10%. In reality, the difference is 11 years (12 additional years for men and 10.1 additional years for women).

How many more years of life do they think the wealthy should expect in a fair world? 3 more years (a 75% reduction from their estimate of what is true).

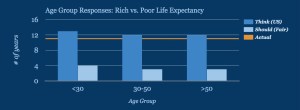

Estimates of the current difference in life expectancy were remarkably similar across all age groups, as were our users’ beliefs about what a fair difference would be. There may be hints of a difference between younger and older adults; one possible explanation is simply ‘mortality salience’, or how much longer users themselves expect to live.

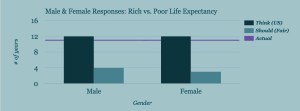

What about gender? Both males and females estimate 12 more years of life for the top 10%, but they differ slightly in the number of additional years they consider fair: 4 and 3, respectively.

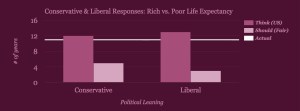

And political leaning? People who identify as conservative and those who identify as liberal differed by only 1 year in their estimates of the life expectancy gain from wealth, and by 2 years in what they thought it should be in a fair world. Conservatives estimate the gain to be 12 years, while liberals put it at 13 years. In a fair society, conservatives consider 5 years and liberals consider 3 years to be a fair number of years gained with wealth. These small differences add up: the reduction each group would like to see (from the gap they believe exists to the gap they consider fair) is 7 years for conservatives vs. 10 years for liberals.

Together, the data reported here suggest that estimates of the present gap in life expectancy are fairly accurate, and that small differences in life expectancy (3-5 years) are considered fair (or perhaps tolerable) by most people. Has the wealth-based life expectancy gap always been this large? According to a Brookings report, it has actually grown from 4.5 years (for the cohort of seniors born in 1920) to 11 years (for the cohort born in 1940). Multiple factors likely contribute to the growth of this gap, including differential access to quality medical care and higher rates of smoking and obesity among lower-income Americans. On top of all this, wealthier people typically retire later and can delay claiming Social Security, which raises their monthly benefits and further increases income inequality in the older population.

Unfortunately, public benefits that were originally intended to be progressive seem to be becoming (unintentionally) regressive over time. Perhaps there are some solutions that might help to keep more Americans living long, happy, and healthy lives?

What do you think? Please join us and play along by downloading Fair Game? from iTunes or Google Play.

Economics and the maximization of profit (and lies).

When a friend sent me this paper the other day, I admit that I took a long, hard look at myself and my economist friends. According to this study, economists, it seems, are worse than most when it comes to telling the truth. The researchers, Raúl López-Pérez and Eli Spiegelman, wanted to examine whether certain characteristics (for instance, religiosity or gender) made people averse to lying. They isolated the preference for honesty by ruling out other motivations, such as altruism or fear of getting caught.

They did this with a very simple experiment in which a pair of participants acted as sender and receiver of information. The sender sat alone in front of a screen that showed either a blue or a green circle and then communicated the circle’s color to the receiver, who could see neither the color nor the sender. Senders received 15 euros every time they reported a green circle and only 14 when they reported a blue one. Receivers earned 10 euros regardless of the color, so they were unaffected by the senders’ honesty or dishonesty.

So senders had four strategies:

1) Tell the truth when shown a green circle and get the maximum payment;

2) Lie when shown a green circle, choosing a lower payment;

3) Tell the truth when shown a blue circle and receive the lower payment;

4) Lie when shown a blue circle and gain an extra euro.

All was well and good if senders saw a green circle: telling the truth earned them the maximum payment (as you can imagine, option 2 was fairly unpopular). But what if they saw blue? They had two options: tell the truth and lose a euro, or lie and get paid more. The experimenters reasoned that a lie-averse sender would always report the circle’s color accurately, while a sender motivated purely by profit would report green regardless.
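To make the incentive structure concrete, here is a minimal Python sketch of the payoffs described above. The payoff amounts come from the text; the two sender "types" (`profit_maximizer` and `lie_averse`) and the helper `outcome` are hypothetical illustrations of the experimenters' reasoning, not code or data from the study.

```python
from collections.abc import Callable

# Payoffs as described in the text: the sender earns 15 euros for reporting
# "green" and 14 for reporting "blue"; the receiver always earns 10.
SENDER_PAYOFF = {"green": 15, "blue": 14}
RECEIVER_PAYOFF = 10


def profit_maximizer(seen: str) -> str:
    """Hypothetical type: report whichever color pays more, ignoring what was seen."""
    return max(SENDER_PAYOFF, key=SENDER_PAYOFF.get)


def lie_averse(seen: str) -> str:
    """Hypothetical type: always report the color actually shown."""
    return seen


def outcome(strategy: Callable[[str], str], seen: str) -> tuple[str, int, int, bool]:
    """Return (report, sender payoff, receiver payoff, whether the sender lied)."""
    report = strategy(seen)
    return report, SENDER_PAYOFF[report], RECEIVER_PAYOFF, report != seen


for seen in ("green", "blue"):
    for strategy in (profit_maximizer, lie_averse):
        report, sender, receiver, lied = outcome(strategy, seen)
        print(f"{strategy.__name__:16} saw {seen:5} -> reports {report:5} "
              f"(sender {sender}, receiver {receiver}, lied={lied})")
```

Only a blue circle separates the two types: on green, both report green and earn 15 euros; on blue, the profit maximizer lies to earn 15, while the lie-averse sender reports blue and accepts 14. The receiver's 10 euros never change, which is what keeps altruism out of the picture.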

Participants came from a wide array of socio-economic and religious backgrounds, as well as a range of majors, which the researchers grouped into business and economics, humanities, and other (science, engineering, psychology). The results showed little difference in honesty as a function of socio-demographic characteristics or gender. A student’s major, however, was a different story. Those in the humanities were the most honest of all, telling the truth a little over half the time. The broad “other” group was a bit less honest, with around 40% straight shooters. And the business and economics group? They scraped the bottom, with a 23% rate of honesty.

Keep in mind that this was one study of one group of people; however, it does suggest that studying economics may make people less likely to tell the truth for its own sake. And this holds water, economically speaking: 1 euro has clear and measurable value, since it can be exchanged for any number of things, whereas telling the truth in this situation carries no financial value (which is not to say that lying in finance is not costly; clearly it is). But rationalization, which we all engage in, may come more easily to those who think in terms of opportunity cost and percent profit.

This is not terribly surprising to me in the context of the broader history of economics, which has been characterized by the study of selfishness. The concept of the invisible hand (inherent in the notion of self-correcting markets) holds that people should act selfishly (maximizing their own profits) and that the market will combine all of their actions into an efficient outcome. While it’s true that markets can sometimes accommodate a range of behaviors without failing, if we keep teaching students the benefits and logic of rational self-interest, what can we really expect?

Real-world Endowment

One of economists’ common critiques of behavioral economics is its reliance on college students as a subject pool. The argument is that this population’s lack of real-world experience (paying taxes, investing in stocks, buying a house) makes them a different kind of decision maker, one that conceptualizes decisions in altogether different ways. And although many decision-making studies in behavioral economics have shown that young adults do not act much differently from older adults when it comes to their core behavior (think of doctors whose diagnoses are influenced by defaults and the framing of choices, for example), the argument persists as a sweeping dismissal of using students as the main testing ground.

One area where we can test this assumption is with the endowment effect. Simply put, the endowment effect shows that we value the things we own more than identical products that we don’t own. This causes a mismatch between buyers and sellers, where buyers are often willing to spend less than the seller deems an acceptable price.

This puts into question the standard assumption that consumers hold constant, well-defined preferences, which is why the endowment effect has puzzled economists for quite some time. In principle, valuations should not be affected by ownership: if a purple hat is worth $15 to you, it should be worth $15 to you whether or not you own it, both before and after you purchase it.

Let’s say that undergraduate A receives a mug and is asked how much money she would require to sell it to undergraduate B. Studies find that undergraduate A will demand far more to part with the mug than undergraduate B, who has no attachment to the very same mug, is willing to pay for it. Now, these students don’t have much experience with real-world markets. So the question is: would people with experience in these markets behave differently from their inexperienced undergraduate counterparts?

In his senior research paper, Sean Tamm studied exactly this*. He approached 30 car salespeople and 46 realtors, a population that presumably has extensive experience negotiating their willingness to accept (the lowest price at which they will sell) as well as their willingness to pay (the highest price at which they will buy). He endowed half of these participants with mugs, then asked the mug owners (sellers) what it would take for them to sell and the others (buyers) what they would pay to buy. Despite the participants’ extensive real-world market experience, willingness to accept was about three times higher than willingness to pay, demonstrating that even expert negotiators are susceptible to the endowment effect. This is consistent with previous research showing that owned goods are valued at about 2.5 times the value of unowned goods.

This is one more example of real-world experience failing to play the protective role we often assume it does. It also suggests that our brains and the way we make decisions are similar, and that for the most part, students operate under the same constraints as people with much more experience. In the end, we may just have to accept that students are real people (most of the time).

*“Can Real Market Experience Eliminate the Endowment Effect?” by Sean Tamm, Stetson University

Ideas from Literature

Not only do I find examples of behavioral economics in literature (see this recent post), sometimes I get research ideas from it. This passage from Wallace Stegner’s Angle of Repose was one such instance:

Touch. It is touch that is the deadliest enemy of chastity, loyalty, monogamy, gentility with its codes and conventions and restraints. By touch we are betrayed and betray others… an accidental brushing of shoulders or touching of hands… hands laid on shoulders in a gesture of comfort that lies like a thief, that takes, not gives, that wants, not offers, that awakes, not pacifies. When one flesh is waiting, there is electricity in the merest contact.

We already know that touch can change our behavior; for instance, holding something for a few seconds makes us much more likely to buy it. But how might touch as slight as the kind described here change interpersonal dynamics? How exactly might I test this idea? What might an experiment look like? And could I possibly get approval for it from the Institutional Review Board? More on this soon, I hope…

Squash and Our Intuitive Strategic Thinking

As you may or may not know, I am a fairly avid squash player (not very good, but I love the game). One of my favorite aspects of squash, aside from the great exercise, is the strategic thinking it requires.

The other day while playing, I had a slight itch to change strategies. It wasn’t a conscious, logical process; it was more like the urge to scratch an itch or pick a scab. And as with picking a scab, my better judgment told me that making the switch would be a bad idea, but the urge was too strong, and I switched my playing style. And it worked!

After the game I wondered, where does this itch come from? Is it part of our creative instinct for exploring and trying out new strategies? Do we have a desire to try new things?

A Fun New iPhone app: At a boy!

A few months ago, I had the idea to create an iPhone app that would give me (us) compliments. It turns out that, as humans, not only are we sensitive to rude remarks from strangers, but we also get very excited when we receive kind words, even random ones; they make us feel much better, even when they come from strangers who don’t know us very well.

At a boy! is a completely free app, and you can find it here.

HOW TO USE: When you open the app, you get a compliment; to get a new one, simply tap the screen. I do want to encourage you to use the thumbs up/down to let me know which compliments make you feel best. This way we will be able to figure out which kinds of compliments work better and which work worse.

Most important, users of At a boy! can submit their own compliments for other users to read: just tap the pen icon and type one in.

Here, for example, is a compliment that a French-speaking user of our app submitted (if you can, please submit compliments in English as well):

Doesn’t that make you feel good to read? We’ve had a few dozen great compliments already submitted, and we could always use more!

By the way, did I tell you that you look very nice today and that you are very clever?

Irrationally yours

Dan

Why Businesses Don’t Experiment

A few years ago, a marketing team from a major consumer goods company came to my lab eager to test some new pricing mechanisms using principles of behavioral economics. We decided to start by testing the allure of “free,” a subject my students and I had been studying. I was excited: The company would gain insights into its customers’ decision making, and we’d get useful data for our academic work. The team agreed to create multiple websites with different offers and pricing and then observe how each worked out in terms of appeal, orders, and revenue.

Several months later, right before we were due to go live, we had a meeting about the final details of the experiment—this time with a bigger entourage from marketing. One of the new members noted that because we were extending differing offers, some customers might buy a product that was not ideal for them, spend too much money, or get a worse deal overall than others. He was correct, of course. In any experiment, someone gets the short end of the stick. Take clinical medical trials, I said to the team. When testing chemotherapy treatments, some patients suffer more so that, down the road, others might suffer less. I hoped this put it in perspective. Fortunately, I said, price testing household products requires far less suffering than chemo trials.

But I could tell I was losing them. In a sense, I was impressed. It was a beautiful human sentiment they were conveying: We care about all customers and don’t want to treat any one of them unfairly. A debate ensued among the group: Are we willing to sacrifice some customers “just” to learn how the new pricing approaches work?

They hedged. They asked me what I thought the best approach was. I told them that I was willing to share my intuition but that intuition is a remarkably bad thing to rely on. Only an experiment gives you the evidence you need. In the end, it wasn’t enough to convince them, and they called off the project.

This is a typical case, I’ve found. I’ve often tried to help companies do experiments, and usually I fail spectacularly. I remember one company that was having trouble getting its bonuses right. I suggested they do some experiments, or at least a survey. The HR staff said no, it was a miserable time in the company. Everyone was unhappy, and management didn’t want to add to the trouble by messing with people’s bonuses merely for the sake of learning. But the employees are already unhappy, I thought, and the experiments would have provided evidence for how to make them less so in the years to come. How is that a bad idea?

Companies pay amazing amounts of money to get answers from consultants with overdeveloped confidence in their own intuition. Managers rely on focus groups—a dozen people riffing on something they know little about—to set strategies. And yet, companies won’t experiment to find evidence of the right way forward.

I think this irrational behavior stems from two sources. One is the nature of experiments themselves. As the people at the consumer goods firm pointed out, experiments require short-term losses for long-term gains. Companies (and people) are notoriously bad at making those trade-offs. Second, there’s the false sense of security that heeding experts provides. When we pay consultants, we get an answer from them and not a list of experiments to conduct. We tend to value answers over questions because answers allow us to take action, while questions mean that we need to keep thinking. Never mind that asking good questions and gathering evidence usually guides us to better answers.

Despite the fact that it goes against how business works, experimentation is making headway at some companies. Scott Cook, the founder of Intuit, tells me he’s trying to create a culture of experimentation in which failing is perfectly fine. Whatever happens, he tells his staff, you’re doing right because you’ve created evidence, which is better than anyone’s intuition. He says the organization is buzzing with experiments.

And so is that consumer goods company. A group there is studying consumer psychology and behavioral economics and is amassing evidence that’s impressive by any academic standard. Years after our false start, they’re recognizing the dangers of relying on intuition.

Creating God in Our Own Image

Question: what are God’s views on affirmative action, the death penalty and same-sex marriage? Answer: whatever you want them to be.

That’s according to a recent study by Nicholas Epley, Benjamin Converse, Alexa Delbosc, George Monteleone and John Cacioppo, which found that we tend to ascribe our own views to God.

Past studies have shown that when we reason about other people, we form an opinion of their views based on two sources: egocentric info (i.e., what we ourselves believe) and outside clues (what the other person has said and done, and what others have said about them).

Here, the researchers wanted to find out how much we rely on egocentric info to construe other people’s views, including God’s. To that end, they had devout American participants provide their personal views on various issues (abortion, death penalty, Iraq war, etc.), as well as what they thought were the views of others (Katie Couric, George Bush, the average American, God, etc.).

When the researchers compared participants’ personal views with the participants’ estimates of others’ views, they found one significant pattern: there was a correlation between participants’ personal views and their estimates of God’s view. For example, participants who said they were for same-sex marriage tended to also say that God was for same-sex marriage. And participants who said they were against same-sex marriage tended to also say that God was against same-sex marriage.

But this wasn’t the case for the other figures – Couric, Bush, average American, and so forth. Participants who said they were for same-sex marriage were statistically neither more nor less likely to say that Couric was for same-sex marriage than those who held the opposite view. In other words, what I say Couric thinks has nothing to do with what I myself think. But what I say God thinks has lots to do with what I myself think.

But correlation doesn’t imply causation, so to shed light on the direction of causality, the researchers ran two follow-up experiments. This time, instead of just surveying participants’ current views, they induced participants to change their personal views by randomly assigning them to give a speech, in front of a camera, either for or against the death penalty. Because assignment was random, some people ended up arguing for their personal view, while others argued against it (many past studies have shown that in this context, people tend to shift their own opinions in the direction of the speech they delivered). So, what about the other views (God’s, Couric’s, etc.)? Would participants revise those as well?

Yes and no. The only other view that changed was God’s. As participants’ own views changed, so did their estimates of God’s view. A participant who started out strongly for the death penalty but took on a more moderate view after arguing against it on camera also ascribed a more moderate view to God, while his estimates of the others’ views remained unchanged.

Overall, these results suggest that God is a blank slate onto which we project whatever we choose. We join religious communities that argue for our viewpoint, and we interpret religious readings to support our personal positions.

Irrationally Yours,

Dan

P.S. And happy birthday to my little sister Tali

The Long-Term Effects of Short-Term Emotions

The heat of the moment is a powerful, dangerous thing. We all know this. If we’re happy, we may be overly generous. Maybe we leave a big tip, or buy a boat. If we’re irritated, we may snap. Maybe we fire off that nasty e-mail to the boss, or punch someone. And for that fleeting second, we feel great. But the regret, and the consequences of that decision, may last years, a whole career, or even a lifetime.

At least the regret will serve us well, right? Lesson learned—maybe.

Maybe not. My friend Eduardo Andrade and I wondered if emotions could influence how people make decisions even after the heat or anxiety or exhilaration wears off. We suspected they could. As research going back to Festinger’s cognitive dissonance theory suggests, the problem with emotional decisions is that our actions loom larger than the conditions under which the decisions were made. When we confront a situation, our mind looks for a precedent among past actions without regard to whether a decision was made in emotional or unemotional circumstances. Which means we end up repeating our mistakes, even after we’ve cooled off.

I said that Eduardo and I wondered if past emotions influence future actions, but, really, we worried about it. If we were right, and recklessly poor emotional decisions guide later “rational” moments, well, then, we’re not terribly sophisticated decision makers, are we?

To test the idea, we needed to observe some emotional decisions. So we annoyed some people by showing them a five-minute clip from the movie Life as a House, in which an arrogant boss fires an architect, who proceeds to smash the firm’s models. We made other subjects happy by showing them (what else?) a clip from the TV show Friends. (Eduardo’s previous research had established the emotional effects of these clips.)

Right after that, we had them play a classic economics game known as the ultimatum game, in which a “sender” (in this case, Eduardo and me) has $20 and offers a “receiver” (the movie watcher) a portion of the money. Some offers are fair (an even split) and some are unfair (you get $5, we get $15). The receiver can either accept or reject the offer. If he rejects it, both sides get nothing.

Traditional economics predicts that people—as rational beings—will accept any offer of money rather than reject an offer and get zero. But behavioral economics shows that people often prefer to lose money in order to punish a person making an unfair offer.
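The payoff rule of the ultimatum game can be sketched in a few lines of Python. The $20 pot and the $10 and $5 offers come from the text; the `min_acceptable` threshold of the fairness-minded receiver is a hypothetical parameter chosen for illustration, not a value from our study, and the two receiver functions simply encode the two predictions described above.

```python
POT = 20  # dollars the sender splits, as in the text


def payoffs(offer: int, accepted: bool) -> tuple[int, int]:
    """Return (sender, receiver) payoffs when the receiver is offered `offer`."""
    if not 0 <= offer <= POT:
        raise ValueError("offer must be between 0 and the pot")
    return (POT - offer, offer) if accepted else (0, 0)


def rational_receiver(offer: int) -> bool:
    """Traditional prediction: any positive amount beats getting nothing."""
    return offer > 0


def fairness_minded_receiver(offer: int, min_acceptable: int = 8) -> bool:
    """Rejects offers below a hypothetical fairness threshold, at a cost to both sides."""
    return offer >= min_acceptable


for offer in (10, 5):  # the fair and unfair offers from the text
    for receiver in (rational_receiver, fairness_minded_receiver):
        accepted = receiver(offer)
        decision = "accept" if accepted else "reject"
        print(f"offer ${offer:>2} to {receiver.__name__}: "
              f"{decision} -> payoffs {payoffs(offer, accepted)}")
```

Rejecting the $5 offer costs the receiver $5 in order to deny the sender $15; the traditional model says no one should pay that price, yet many people do.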

Our findings (published in Organizational Behavior and Human Decision Processes) followed suit, and, interestingly, the effect was amplified among our irritated subjects. Life as a House watchers rejected far more offers than Friends watchers, even though the content of the movie had nothing to do with the offer. Just as a fight at home may sour your mood, increasing the chances that you’ll send a snippy e-mail, being subjected to an annoying movie leads people to reject unfair offers more frequently even though the offer wasn’t the cause of their mood.

Next came the important part. We waited. And when the emotions evoked by the movie were no longer a factor, we had the participants play the game again. Our fears were confirmed. Those who had been annoyed the first time they played the game rejected far more offers this time as well. They were tapping the memory of the decisions they had made earlier, when they were responding under the influence of feeling annoyed. In other words, the tendency to reject offers remained heightened among our Life as a House group—compared with control groups—even when they were no longer irritated.

So now I’m thinking of the manager whose personal portfolio loses 10% of its value in a week (entirely plausible these days). He’s frustrated, angry, nervous—and all the while, he’s making decisions about the day-to-day operations of his group. If he’s forced to attend to those issues right after he looks at his portfolio, he’s liable to make poor decisions, colored by his inner turmoil. Worse, though, those poor decisions become part of the blueprint for his future decisions—part of what his brain considers “the way to act.”

That makes the familiar strategies for handling the heat of the moment even more important: Take a deep breath. Count backward from 10 (or 10,000). Wait until you’ve cooled off. Sleep on it.

If you don’t, you may regret it. Many times over.

Monkeys like to mix it up

DUKE (US)—Given a choice between spending a token to get their absolute favorite food or spending it to have a choice from a buffet of options, capuchin monkeys will opt for variety.

In fact, they’ll even eat a less-preferred food from that buffet when the favorite food is on it. They choose variety for variety’s sake.

The choices made by these captive-bred monkeys in an Italian research facility seem to show some innate desire to seek variety, says Dan Ariely, the James B. Duke Professor of psychology and behavioral economics at Duke University.

In a series of experiments Ariely conducted with colleagues at the Istituto di Scienze e Tecnologie della Cognizione in Rome, the eight monkeys first had to be taught that the abstract tokens, such as poker chips, plastic cylinders and metal nuts, represented different kinds of choice. With training, the tokens were associated with being able to buy one piece of the most-preferred food, or being able to buy one piece from an assortment of foods that included the most-preferred food.

Lead author Elsa Addessi has used this token method before with this troop of capuchins, who are on public display as well as being used in non-invasive cognitive experiments.

“Economically, the tokens should be equivalent, because they both give you the food you like,” Ariely says.

But once they had the hang of it, the monkeys as a group chose the variety tokens over the “single-food tokens.” Moreover, having chosen the variety tokens, the monkeys didn’t always take the most-preferred food even when it was offered as part of the assortment. This means that they prefer variety for variety’s sake and are willing to eat food they like less to satisfy their desire for variety.

The work appears online in Behavioural Processes.

This simple experiment sheds some light on consumer behavior, Ariely says. Earlier work on variety-seeking has found that people eat 43 percent more M&M candies when there are 10 colors in the bowl instead of just seven.

“People choose variety for variety’s sake,” Ariely says. “They often choose things they don’t even like as well just for the variety. We knew about this, so the interesting thing was to figure out how basic it is.”

The behavior of the capuchins, which are native to South America, suggests “that there’s some inherent basic strategy for variety,” Ariely explains. In the wild, variety-seeking may help ensure a nutritionally varied diet. It is also possible, the authors suggest, that variety-seeking contributed to the rise of bartering and then abstract money in human society.

At the same time, Ariely is somewhat puzzled that humans can get stuck in a rut and not seek more variety. “Ask yourself: How many new things have you tried lately? Have you tried every cereal in the cereal aisle?” It may be that you’re enjoying a daily bowl of a cereal that you would rate as an 8, when just a few feet away on the shelf there is a cereal you’d rate as a 9, but you’ve never tried it.

Businesses can push variety on customers with assortment packs, Ariely suggests, and vicarious experiences like the Food Network can encourage exploration as well.

“How do we get ourselves to explore? Even monkeys do it—so maybe we should also try more variety.”

The research was supported by the Istituto di Scienze e Tecnologie della Cognizione and Duke.
